A foundational primer for executives leading forward-thinking firms in the new, AI-driven operating environment. (Photo by NordWood Themes on Unsplash)

Enterprise AI is reshaping operating models, capital allocation, and competitive advantage. Leaders who can evaluate AI investments, assess risk, and distinguish between machine learning, LLMs, and AI agents will drive smarter AI strategy and sustainable growth.

Non-technical executive? This foundational AI primer will position you to lead a forward-thinking firm.

The Origins: Machine Learning & Data Science

AI is only as good as the data and decisions that shape it.

Before ChatGPT, there was machine learning. Machine Learning (ML) emerged from statistics and computer science. Instead of explicitly programming rules (“if X, then Y”), ML systems learn patterns from historical data.

- Data Science organizes, cleans, and structures data.

- Machine Learning uses that data to identify patterns and generate predictions.

- AI is the broader umbrella that includes ML and other techniques.

Machine Learning use cases may look like:

- Forecast operating spend for the next quarter or year

- Predict churn, whether of product users, customers, or employees (attrition)

- Detect fraudulent activity in sales or internal processes

- Optimize supply chains to reduce or eliminate waste

The examples above are classic Machine Learning use cases: they use historical data to recognize patterns and predict what is likely to come next. As an executive, keep two limiting factors of ML in mind: a) the quality of its outcomes depends largely on the data the models are trained on, and b) data scientists are needed to build these models and to maintain and govern them over time.
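To make the difference between programmed rules and learned patterns concrete, here is a deliberately tiny Python sketch of the churn example. The customer history, the single "days inactive" feature, and the function names are hypothetical, and the "learning" is a one-feature threshold search standing in for a real ML model. It illustrates the idea only; it is not how a data science team would build a production churn model.

```python
# Hypothetical historical data: (days since last login, did the customer churn?)
HISTORY = [(2, False), (5, False), (9, False), (12, True),
           (20, True), (31, True), (7, False), (15, True)]


def rule_based_churn(days_inactive: int) -> bool:
    """Traditional programming: a person hard-codes the cutoff ("if X, then Y")."""
    return days_inactive > 30


def learn_cutoff(history: list[tuple[int, bool]]) -> int:
    """Toy 'learning': pick the cutoff that misclassifies the fewest past customers."""
    best_cutoff, fewest_errors = history[0][0], len(history) + 1
    for candidate, _ in history:
        # Count how many historical customers this cutoff would get wrong.
        errors = sum((days > candidate) != churned for days, churned in history)
        if errors < fewest_errors:
            best_cutoff, fewest_errors = candidate, errors
    return best_cutoff


cutoff = learn_cutoff(HISTORY)


def learned_churn(days_inactive: int) -> bool:
    """The prediction rule now comes from the data rather than from a person."""
    return days_inactive > cutoff


print(f"Learned cutoff: {cutoff} days")
print("Hand-written rule predicts churn at 14 days inactive:", rule_based_churn(14))
print("Learned model predicts churn at 14 days inactive:", learned_churn(14))
```

The learned cutoff is only as good as `HISTORY`: if those records were incomplete or biased, the model would faithfully learn the flaw, which is the data-quality limitation described above.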

Why data and Machine Learning matter for executives

Machine Learning outcomes (more so than those of other AI) reflect historical data. When done right, Machine Learning surfaces powerful associations in complex patterns that humans alone cannot see, creating new revenue opportunities or reducing firm risk. That said, if your data is fragmented, biased, or poorly governed, your Machine Learning outcomes will amplify those flaws. Data management platforms like Snowflake, Databricks,¹ and others have grown rapidly in recent years as companies invest more heavily in data management and governance to get the most out of their enterprise data and AI.

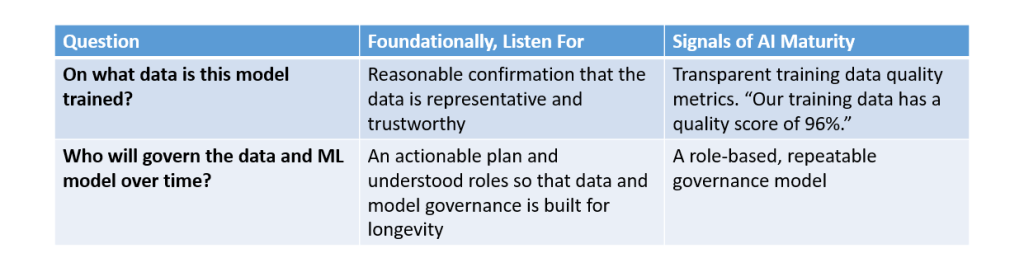

Executive questions to evaluate the maturity of your firm’s data readiness for AI:

AI maturity begins long before generative tools. It begins with disciplined data strategy. By asking questions about data and AI governance, you are enforcing a culture of data discipline that buffers your firm from avoidable AI risk.

The Rise of LLMs & RAG

Large Language Models generate language. They don’t inherently “know” your business – but they can certainly accelerate it with thoughtful guidance and governance.

The recent explosion of (generative) enterprise AI is driven by Large Language Models (LLMs): models trained on massive amounts of text to predict the next word in a sequence. That simple mechanism allows them to summarize, draft, brainstorm, and reason across language. Because you can work with them in plain language (the broader field is called natural language processing, or "NLP"), they opened direct access to artificial intelligence to people without a data science background. Examples include GPT-style models and others built on transformer architectures.

LLMs are powerful because they translate across domains, synthesize complex information, generate structured output, and accelerate knowledge work. On their own, however, they have no access to your proprietary data. That’s where Retrieval-Augmented Generation (RAG) comes in.

RAG systems:

- Retrieve relevant internal documents or data

- Feed that information into the LLM

- Generate grounded, context-aware outputs

Combining LLMs and RAG gets you answers anchored in your policies, contracts, financial data, and/or internal knowledge base, depending on what your firm chooses to share. RAG transforms LLM-driven AI from a clever assistant into a strategic asset.
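The three RAG steps (retrieve, feed, generate) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the policy documents are invented, retrieval is naive keyword overlap (production systems typically use embeddings and a vector index), and `generate` is a hypothetical placeholder marking where a real system would call an LLM.

```python
import re

# Hypothetical internal knowledge base; in practice a document store or vector index.
DOCUMENTS = {
    "travel_policy": "Employees may book economy class for flights under six hours.",
    "expense_policy": "Meals over 75 dollars require manager approval.",
    "security_policy": "Customer data must never be pasted into public AI tools.",
}


def words(text: str) -> set[str]:
    """Lower-case the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, docs: dict[str, str], k: int = 1) -> list[tuple[str, str]]:
    """Step 1: rank documents by how many words they share with the question."""
    query = words(question)
    ranked = sorted(docs.items(), key=lambda item: len(query & words(item[1])), reverse=True)
    return ranked[:k]


def build_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Step 2: feed the retrieved text to the model alongside the question."""
    context = "\n".join(f"[{name}] {text}" for name, text in passages)
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def generate(prompt: str) -> str:
    """Step 3 (hypothetical placeholder): a real system would send `prompt` to an LLM."""
    return f"<LLM answer grounded in:\n{prompt}>"


question = "Do meals need manager approval?"
print(generate(build_prompt(question, retrieve(question, DOCUMENTS))))
```

The key design choice sits in `build_prompt`: the model is instructed to answer only from the retrieved context, which is what anchors the output in the firm's own documents rather than in the model's general training data.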

LLM and RAG use cases may look like:

- Analyze a long contract to summarize it and identify themes and points of interest, so it can be read and responded to in a fraction of the time.

- Generate an idea brainstorm to help you solve a complex problem, like “Given what you know of my firm, as an executive what can I do to directly accelerate revenue in the next quarter?”

- Create communications and content, both at a firm-wide and personal level.

- Personalize onboarding with tailored materials and guides for each new employee.

- Rapidly prototype novel concepts and products at reduced cost.

Why LLMs and RAG matter for executives

LLM use is critical to stay competitive in a rapidly changing business environment

There is no question that generative enterprise AI, powered by LLMs and RAG, is as impactful to the way we do business as the calculator or the personal computer were in earlier generations of technology. Enterprise adoption of generative AI is already driving significant productivity gains (up to 43%²), reducing operational friction, and enabling rapid, data-driven decision-making. These tools act as strategic partners that synthesize complex data, generate tailored content, and automate workflows, allowing leaders to shift their own focus, and their staff's, to high-impact strategic goals rather than manual tasks. As a leader, you cannot ignore this trend. Either your firm adopts AI in a meaningful way to inject new value into your operating model, or you will watch your competitors outpace you and take market share.

Protecting your firm, clients, and shareholders from AI risk

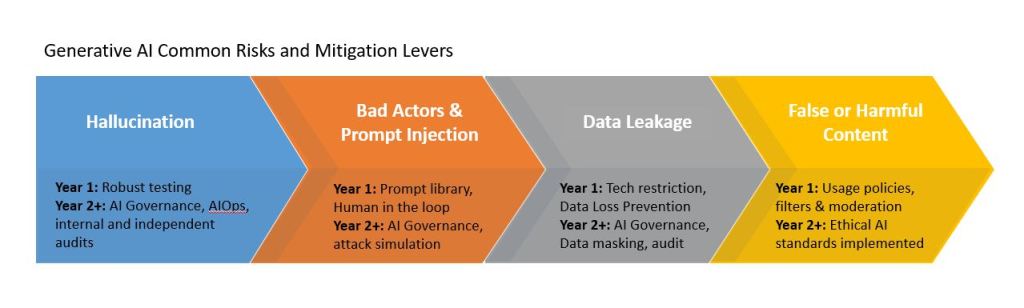

A critical role for firm leaders is to weigh opportunity against risk and to set the firm’s risk tolerance. By understanding the most common risks associated with generative AI, you can probe for mitigation tactics and shape AI governance at your firm.

Risks associated with generative enterprise AI include, but are not limited to:

- Hallucination, where the AI fabricates information that sounds credible enough to satisfy the prompt but is not factual.

- Bad actors and prompt injection attacks, in which malicious instructions hidden in user input or retrieved content manipulate the model, creating firm risk through unauthorized access, disclosure of sensitive data, and the spread of misinformation.

- Data leakage, exposing high-value, actionable information that lowers the barrier to sophisticated attacks such as deepfakes and phishing scams, enables theft of intellectual property (IP), and can erode client trust in how the firm handles their data, with potential errors and omissions (E&O) exposure.

- Generation of false, biased, or harmful content that damages your firm’s reputation and its relationships with customers.

In the short term, robust testing before and during the first phases of an AI product launch is critical to reducing AI risk. In the long term, leaders need to understand the operational impact of launching an AI solution and have a transparent view of its total cost of ownership (TCO), from build to maintenance. Knowing TCO for year one and for ongoing years lets you gauge return on investment and the level of organizational commitment required to maintain the model over time (often called AIOps and/or AI governance). A robust operational AI infrastructure dramatically reduces a model’s risk profile over time. Understanding AI risks as a leader, and up-skilling teams to plan for risk scenarios when implementing LLMs, is a significant consideration when applying generative AI in your firm.
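The year-one versus multi-year view of TCO is simple arithmetic, sketched below in Python. All dollar figures are hypothetical, and the ROI formula is the basic (benefit - cost) / cost ratio rather than a discounted, finance-grade model.

```python
def total_cost_of_ownership(build_cost: float, annual_run_cost: float, years: int) -> float:
    """Build once, then pay every year to operate, monitor, and govern the model."""
    return build_cost + annual_run_cost * years


def return_on_investment(annual_benefit: float, tco: float, years: int) -> float:
    """Simple, undiscounted ROI: (total benefit - total cost) / total cost."""
    total_benefit = annual_benefit * years
    return (total_benefit - tco) / tco


# Hypothetical figures for illustration only.
BUILD, RUN_PER_YEAR, BENEFIT_PER_YEAR = 400_000, 150_000, 300_000

for years in (1, 3):
    tco = total_cost_of_ownership(BUILD, RUN_PER_YEAR, years)
    roi = return_on_investment(BENEFIT_PER_YEAR, tco, years)
    print(f"{years} year(s): TCO ${tco:,.0f}, ROI {roi:.1%}")
```

With these assumed numbers, the same project shows a negative return in year one and only a modest positive return over three years, which is why ongoing run and governance costs belong in the funding conversation from the start.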

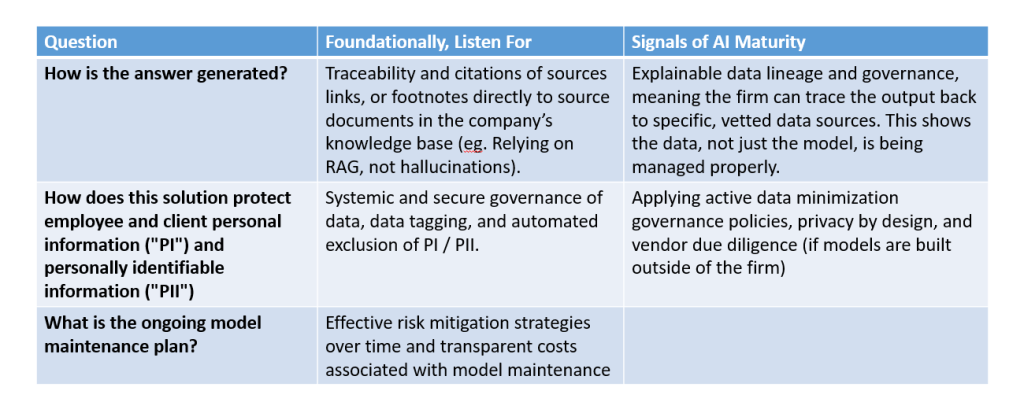

The leap from “AI experiment” to “AI advantage” happens when models integrate enterprise data safely and reliably.

Executive questions to evaluate the maturity of your firm’s data readiness for generative AI (LLMs and RAG):

AI Agents & Agentic Systems

Agents don’t just answer questions — they take action.

As covered above, enterprise AI has evolved in waves: first, predictive models embedded in analytics platforms, then generative systems like LLMs that could produce language on demand. Retrieval-Augmented Generation (RAG) marked the first major step toward grounding those models in enterprise reality, connecting probabilistic language engines to trusted internal data. Agentic AI builds on that progression by moving from “generate an answer” to “achieve an objective,” orchestrating multiple model calls, data retrieval steps, and tool integrations in sequence. We are now in the early adoption phase of this shift, similar to where cloud computing stood in the late 2000s, with leading organizations piloting agents in contained workflows while governance, reliability, and operating models rapidly mature.

Traditional generative AI responds to prompts. Agentic AI pursues goals.

A single AI agent is a system that:

- Receives an objective: “Pay this monthly bill from XYZ.”

- Breaks it into sub-tasks: “(1) Validate that funds are available, (2) call the API, (3) transfer funds, (4) trigger an email confirming the bill was paid.”

- Uses tools or APIs

- Iterates toward completion
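The agent pattern above (objective, sub-tasks, tools, iteration) can be sketched in Python using the bill-payment example. Everything here is hypothetical: the billing lookup, the `APPROVAL_LIMIT` guardrail, and the account object stand in for real systems; the plan is hard-coded where a real agent would have an LLM generate it; and "iteration" is reduced to a simple loop that stops as soon as a check fails.

```python
from dataclasses import dataclass, field

# Hypothetical stand-ins for systems an agent might be permitted to call.
BILLING_API = {"XYZ": 120.00}   # payee -> amount due this month
APPROVAL_LIMIT = 500.00         # hypothetical guardrail: larger payments need a human


@dataclass
class Account:
    balance: float
    completed: list[str] = field(default_factory=list)


def run_bill_pay_agent(payee: str, account: Account) -> str:
    """Work through the sub-tasks one at a time, stopping if any check fails."""
    # A real agent would ask an LLM to draft this plan from the objective;
    # here it is hard-coded to roughly mirror the example's sub-tasks.
    plan = ["look_up_bill", "validate_funds", "transfer_funds", "send_confirmation"]
    amount = 0.0
    for step in plan:
        if step == "look_up_bill":
            amount = BILLING_API[payee]                      # tool call: billing API
        elif step == "validate_funds":
            if account.balance < amount:
                return "halted: insufficient funds"
            if amount > APPROVAL_LIMIT:
                return "paused: human approval required"     # human stays in the loop
        elif step == "transfer_funds":
            account.balance -= amount                        # tool call: payments system
        elif step == "send_confirmation":
            print(f"Email: paid {payee} ${amount:,.2f}")     # tool call: email service
        account.completed.append(step)
    return "done"


acct = Account(balance=1_000.00)
print(run_bill_pay_agent("XYZ", acct), acct.balance, acct.completed)
```

The guardrail branch is the part executives should ask about: it is where a human is brought back into the loop before the agent acts on high-value or unusual requests.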

Here is another, more complex example:

“Prepare a board-ready competitive analysis.”

An agent could:

- Pull recent earnings reports

- Summarize key insights

- Analyze sentiment

- Generate slides

- Recommend strategic positioning

All with minimal human orchestration.

Agentic systems take this one step further by combining:

- LLM reasoning

- Data retrieval

- Workflow automation

- Tool integration

The result? AI that operates within your processes, not just beside them. The ability to automate intelligent workflows through “agents” represents the next wave of potential value from generative AI for executives and their teams.

Why Agentic AI matters for executives:

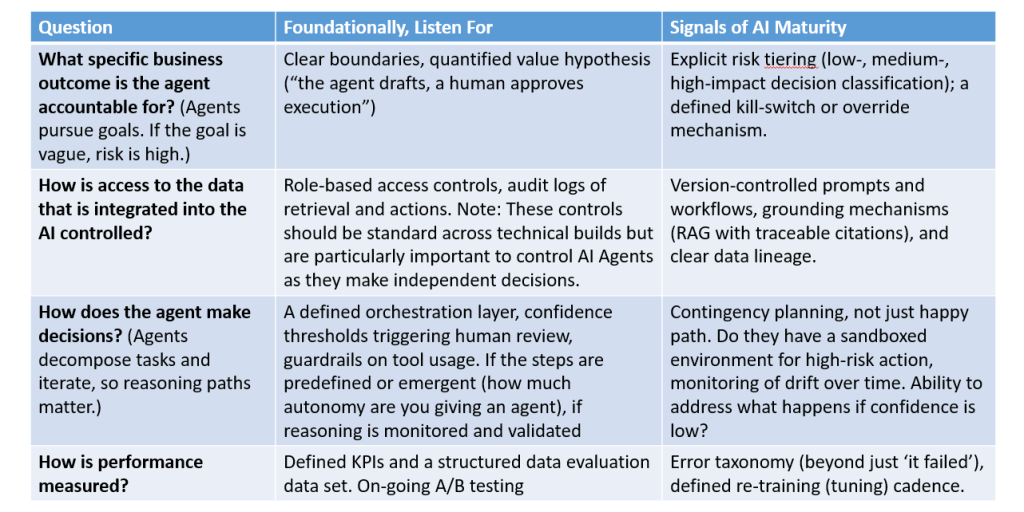

The competitive edge will not come from using AI to write emails faster. It will come from redesigning workflows around AI-enabled autonomy. That said, at the agentic stage, the risk shifts from “interesting experiment” to operational exposure. Think of agentic AI as the high-reward, high-risk option and evaluate agentic initiatives with care. Because agentic AI changes the design of work itself, it is imperative to put appropriate human controls and fail-safes in place to protect the firm and its customers from unwanted or even harmful agentic decisions. When teams request funding for AI agents, executives should evaluate not the demo but the maturity behind it.

Executive questions to evaluate the maturity of your firm’s data readiness for AI Agents and Agentic Systems:

In Closing: Leadership in the age of intelligent systems

Enterprise AI is no longer a future-state discussion. It is an operating reality.

Machine learning taught us that data discipline determines predictive power. LLMs and RAG demonstrated that knowledge work can be accelerated and reshaped at scale. Agentic systems now signal the next frontier — not just insight generation, but intelligent execution embedded directly into enterprise workflows.

The leaders who will define the next decade are not those who merely deploy AI tools. They are the ones who understand the architecture beneath them, who ask disciplined questions about governance and risk, and who redesign work intentionally around intelligent systems. AI literacy at the executive level is becoming as fundamental as financial literacy or digital fluency.

This is not about becoming technical. It is about becoming strategically conversant — understanding enough to allocate capital wisely, protect enterprise value, and ensure AI is implemented in service of durable competitive advantage.

Forward-thinking firms will treat AI not as a project, but as infrastructure. Not as an experiment, but as a capability. Not as a cost center, but as a multiplier of human judgment, speed, and strategic clarity.

The mandate for today’s executives is clear:

- Lead the adoption with intention.

- Invest with discipline.

- Govern with rigor.

- Re-design your organization to thrive alongside intelligent systems.

Enterprise AI will not replace leadership.

But leadership that understands Enterprise AI will outperform leadership that does not.

1 Conflict of Interest (COI) Disclosure: the author is professionally associated with Databricks and Snowflake. The author is in no way compensated by either company for their mention or recommendation in this article.

2 McKinsey (2023): The Economic Potential of Generative AI: The Next Productivity Frontier. https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier